Spring Batch to the rescue

Recently I had to do something that wasn’t exactly in my wheelhouse, and it was kind of a one-off thing too: I had to provide an initial load. In this case that meant I would receive a huge csv file and somehow had to turn it into one big XML file. And if that wasn’t bad enough… they needed the XML file yesterday! After a quick Google search there it was… Spring Batch! Initially I thought it was overkill for a one-off type of thing, but I decided to give it a shot anyway. And guess what? Within a few hours I had cooked up a pretty good solution. Not bad at all! In this blog I am going to show you how easy it is to build a Spring Batch component (backed by Spring Boot) that transforms a csv file into an XML file.

The objective

The objective sounds simple. I have a csv file which could look something like this:

    711 Ocean Drive;Joseph M. Newman;Crime drama;Columbia
    Abbott and Costello in the Foreign Legion;Charles Lamont;Comedy;Universal

and somehow I have to turn this csv file into this:

    <?xml version="1.0" encoding="UTF-8"?>
    <Movies>
        <Movie>
            <Title>711 Ocean Drive</Title>
            <Director>Joseph M. Newman</Director>
            <Genre>Crime drama</Genre>
            <Remark>Columbia</Remark>
        </Movie>
        <Movie>
            <Title>Abbott and Costello in the Foreign Legion</Title>
            <Director>Charles Lamont</Director>
            <Genre>Comedy</Genre>
            <Remark>Universal</Remark>
        </Movie>
    </Movies>

Overview

Spring Batch to the rescue! To make this work the Spring Batch application needs these things:

- an ItemReader

- an ItemProcessor

- an ItemWriter

- some configuration to glue it all together (Job Launcher, Job Repository, Job & Step)

Maven dependencies

But first we have to import some Maven dependencies.

    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-batch</artifactId>
    </dependency>
    <dependency>
        <groupId>org.hsqldb</groupId>
        <artifactId>hsqldb</artifactId>
        <version>${hsqldb.version}</version>
        <scope>runtime</scope>
    </dependency>

The most important dependencies are spring-boot-starter-batch, which gives us the out-of-the-box batch functionality, and hsqldb, which stores all the job information and other non-interesting stuff that Spring needs to execute the job.

For the full list of dependencies I suggest checking out the project from my GitHub. You can find the link to my project at the end of this blog.

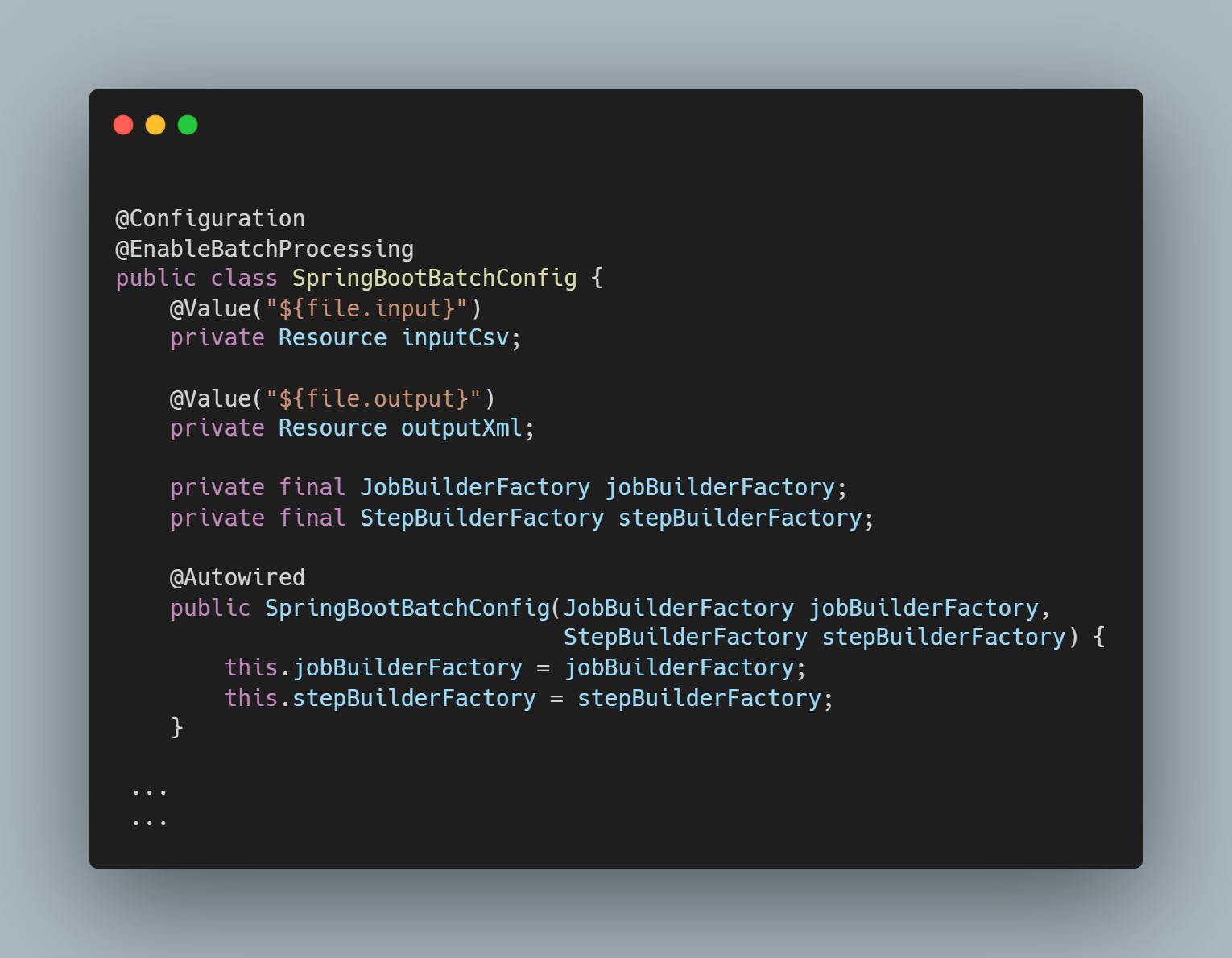

Spring Boot batch config

First up is the configuration. We create a configuration class and enable the batch mechanism by using the @EnableBatchProcessing annotation. This is an important little thing to do because if you don’t place the annotation on top of that class the whole thing will not work!

In this configuration class we define the input file (our csv) and output file (the XML). Also we have to inject the JobBuilderFactory and StepBuilderFactory. We are going to use these factories later on to configure our job.
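To give an idea of the shape of such a class, here is a minimal sketch. It assumes Spring Batch 4.x on Spring Boot 2.x (the generation that ships JobBuilderFactory and StepBuilderFactory), and the property names `input.file` and `output.file` are made up for this illustration; the real project may wire the files differently.

```java
import org.springframework.batch.core.configuration.annotation.EnableBatchProcessing;
import org.springframework.batch.core.configuration.annotation.JobBuilderFactory;
import org.springframework.batch.core.configuration.annotation.StepBuilderFactory;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.io.Resource;

@Configuration
@EnableBatchProcessing // switches on the Spring Batch infrastructure
public class SpringBootBatchConfig {

    // Hypothetical property: location of the csv input file
    @Value("${input.file}")
    private Resource inputFile;

    // Hypothetical property: location of the XML output file
    @Value("${output.file}")
    private Resource outputFile;

    // Factories provided by @EnableBatchProcessing, used later to build the job and step
    @Autowired
    private JobBuilderFactory jobBuilderFactory;

    @Autowired
    private StepBuilderFactory stepBuilderFactory;

    // reader, processor, writer, step and job beans go here
}
```

With this setup the two locations could live in application.properties, for example `input.file=classpath:movies.csv` and `output.file=file:target/movies.xml` (both values are only examples).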

Item reader

The next thing we need to do is create the Item Reader. The Reader will, as you might have guessed already, read the csv file and store the records for further processing. For storing we need to have a DTO (Data Transfer Object) so we will first create that one. Processing happens on a per-record basis, so we only need to model a single record (not the whole csv).

Nothing fancy, nothing exciting… just a DTO.
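A sketch of what that DTO could look like is below. The field names are my assumption, derived from the four columns of the csv and the element names in the target XML; the class in the actual project may name them differently.

```java
// Plain DTO holding one csv record: title;director;genre;remark
class Movie {

    private String title;
    private String director;
    private String genre;
    private String remark;

    public String getTitle() { return title; }
    public void setTitle(String title) { this.title = title; }

    public String getDirector() { return director; }
    public void setDirector(String director) { this.director = director; }

    public String getGenre() { return genre; }
    public void setGenre(String genre) { this.genre = genre; }

    public String getRemark() { return remark; }
    public void setRemark(String remark) { this.remark = remark; }
}
```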

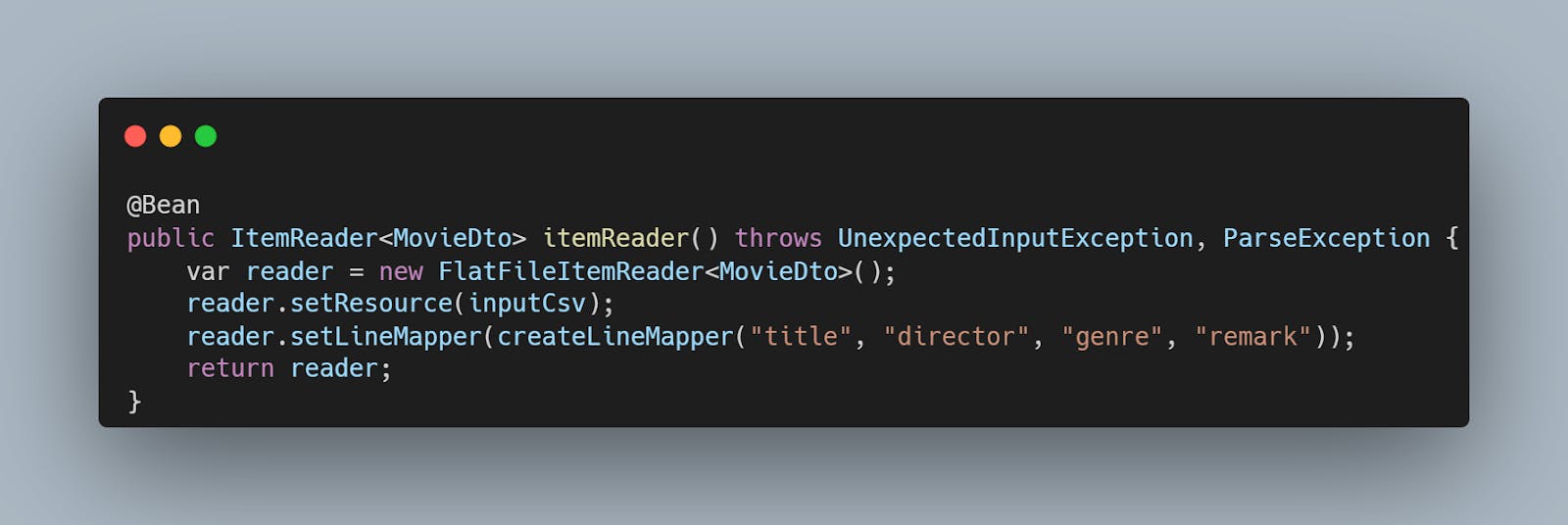

Now it’s time to implement the reader. This will be a bean in the SpringBootBatchConfig class. Putting all the code in here would go too far, so I suggest checking out the project from my GitHub to see exactly what it does and what it is made of. But here is the snippet for my ItemReader.

Two things to note. This reader needs the input csv file so we have to set the Resource and it also needs a mapper for mapping the csv data to the Movie DTO.
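In code, a reader along these lines could look roughly like the excerpt below. This is a sketch, not the exact code from the project: it assumes Spring Batch 4.x, a `Movie` DTO as described above, a mapper class that I call `MovieFieldSetMapper` here (a hypothetical name), and an `input.file` property (also hypothetical). The other beans of the configuration class are left out.

```java
import org.springframework.batch.item.file.FlatFileItemReader;
import org.springframework.batch.item.file.mapping.DefaultLineMapper;
import org.springframework.batch.item.file.transform.DelimitedLineTokenizer;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.io.Resource;

@Configuration
public class SpringBootBatchConfig {

    // Hypothetical property pointing at the csv file
    @Value("${input.file}")
    private Resource inputFile;

    @Bean
    public FlatFileItemReader<Movie> reader() {
        // Splits each line into fields; the sample csv uses ';' as separator
        DelimitedLineTokenizer tokenizer = new DelimitedLineTokenizer();
        tokenizer.setDelimiter(";");

        // Combines the tokenizer with the mapper that fills the Movie DTO
        DefaultLineMapper<Movie> lineMapper = new DefaultLineMapper<>();
        lineMapper.setLineTokenizer(tokenizer);
        lineMapper.setFieldSetMapper(new MovieFieldSetMapper()); // hypothetical mapper class

        FlatFileItemReader<Movie> reader = new FlatFileItemReader<>();
        reader.setResource(inputFile);   // the input csv
        reader.setLineMapper(lineMapper);
        return reader;
    }
}
```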

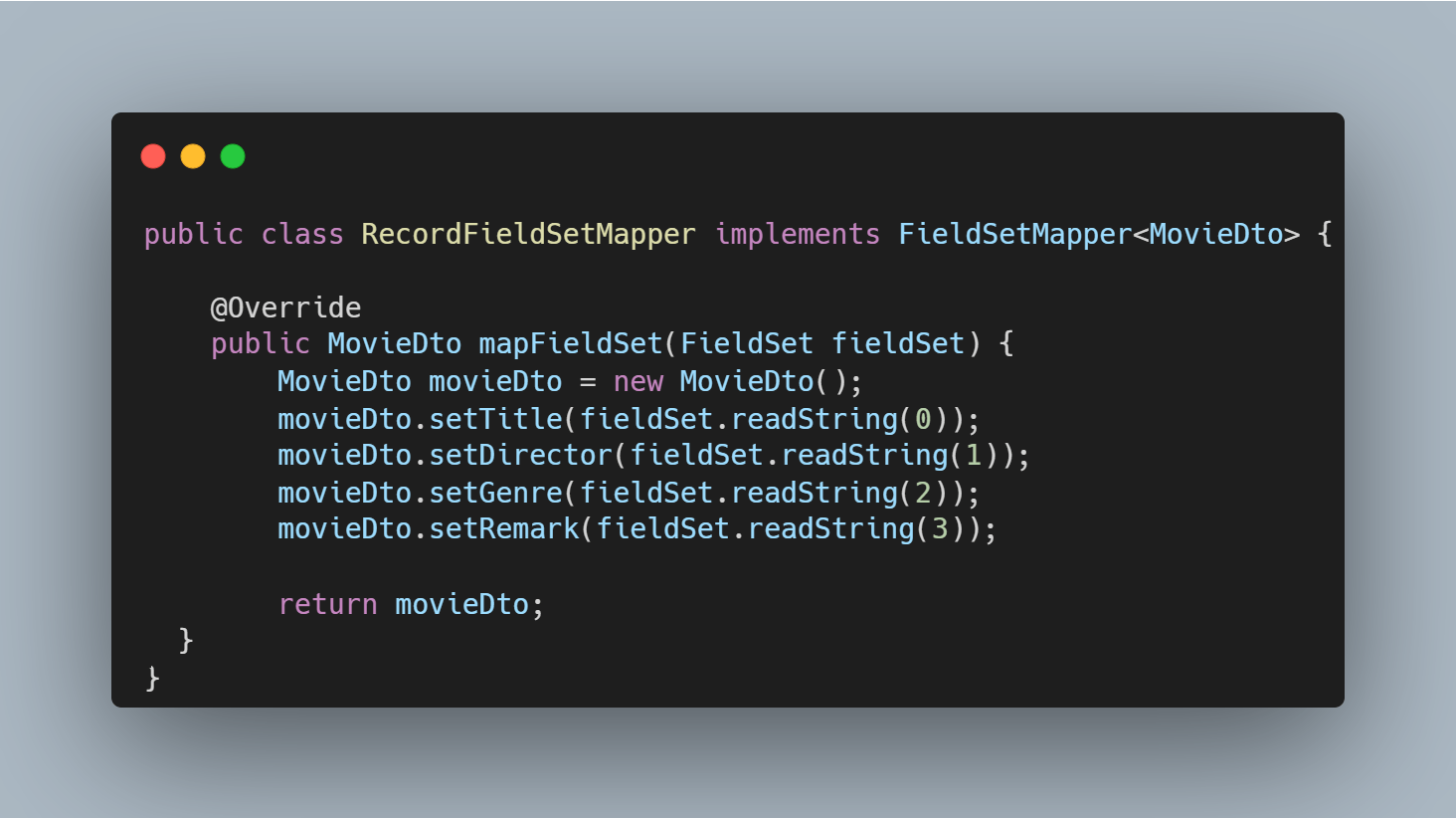

Creating the mapper is as easy as implementing the Spring Batch FieldSetMapper interface and mapping the fields of the csv into the DTO.

We can simply read each field in the csv by using the readString method which accepts the index of the field you want. readString(0) for reading the first field of the record, readString(1) for the second field and so on.
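A mapper in that style might look like this. The class name `MovieFieldSetMapper` and the setter names on `Movie` are assumptions for the sake of the example; the field order follows the sample csv (title, director, genre, remark).

```java
import org.springframework.batch.item.file.mapping.FieldSetMapper;
import org.springframework.batch.item.file.transform.FieldSet;

// Maps one tokenized csv line onto a Movie DTO (class and setter names are assumed)
public class MovieFieldSetMapper implements FieldSetMapper<Movie> {

    @Override
    public Movie mapFieldSet(FieldSet fieldSet) {
        Movie movie = new Movie();
        movie.setTitle(fieldSet.readString(0));    // first field
        movie.setDirector(fieldSet.readString(1)); // second field
        movie.setGenre(fieldSet.readString(2));    // third field
        movie.setRemark(fieldSet.readString(3));   // fourth field
        return movie;
    }
}
```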

Item processor

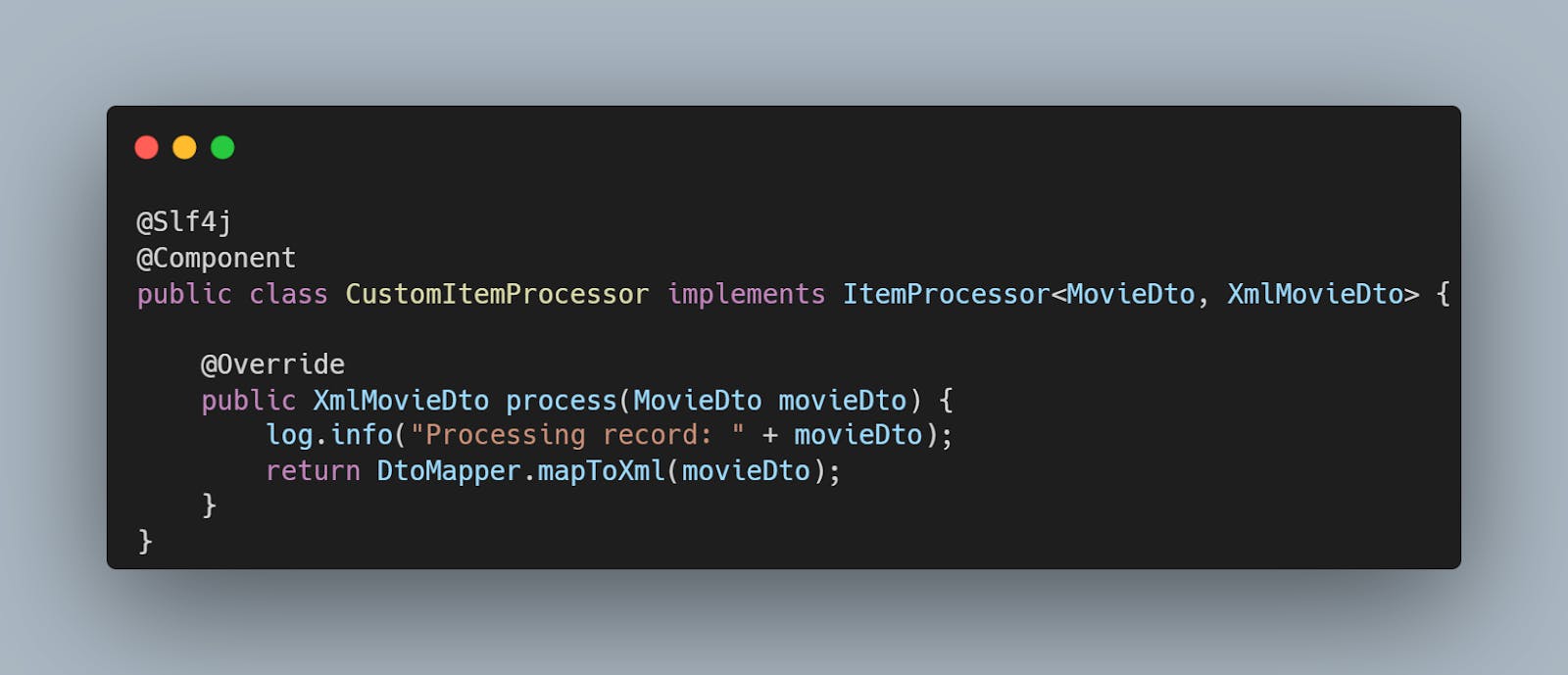

Reader… Implemented! Next up is the Item processor. The processor will execute for each record in our csv. ItemProcessor is a Spring Batch interface. All the processor really does is accept an object from the ItemReader and pass it on to the ItemWriter.

We only need to implement the processing logic which is basically mapping the Movie DTO (which was used during reading) to another DTO which represents the XML “record” (or XML node to be more precise).
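As a rough sketch, such a processor could be as small as the class below. The name `MovieItemProcessor` and the getters and setters on both DTOs are assumptions; only the `ItemProcessor` interface and its `process` method come from Spring Batch.

```java
import org.springframework.batch.item.ItemProcessor;

// Converts the DTO used for reading into the DTO used for writing XML
public class MovieItemProcessor implements ItemProcessor<Movie, XmlMovieDTO> {

    @Override
    public XmlMovieDTO process(Movie movie) {
        XmlMovieDTO xmlMovie = new XmlMovieDTO();
        xmlMovie.setTitle(movie.getTitle());
        xmlMovie.setDirector(movie.getDirector());
        xmlMovie.setGenre(movie.getGenre());
        xmlMovie.setRemark(movie.getRemark());
        return xmlMovie;
    }
}
```

For a one-to-one copy like this the processor feels almost trivial, but it is the natural place for any cleanup or filtering you might need later on.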

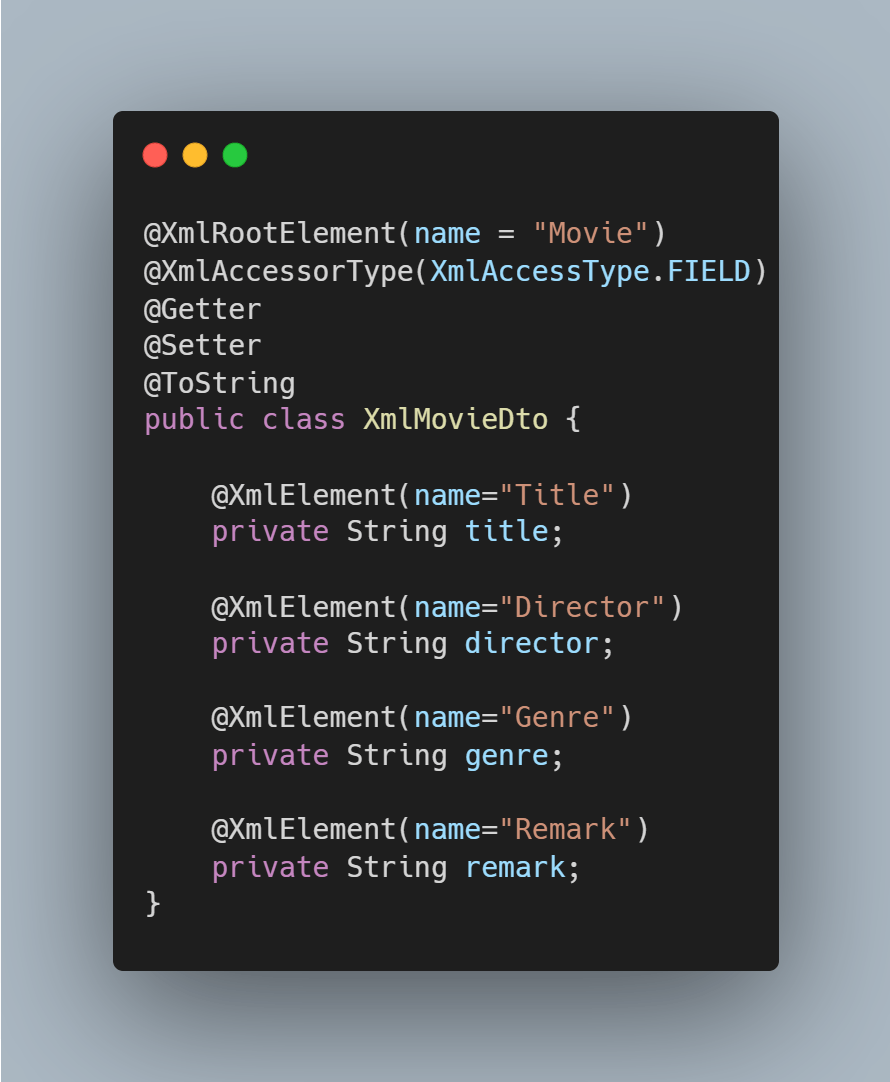

For completeness’ sake, the XmlMovieDTO.

The XmlMovieDTO is annotated with @XmlRootElement to define the name of the movie node that will be used in the XML file. Because we annotate the fields with @XmlElement (and not the getters) we also have to annotate the class with @XmlAccessorType(XmlAccessType.FIELD). If you don’t do that then your application will complain that it has two properties of the same name and you are treated to several IllegalAnnotation exceptions!
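The annotations described here could be applied as in the following sketch. The element names are taken from the target XML shown in the objective; the field names are my assumption. It uses the `javax.xml.bind` packages that go with Spring Boot 2.x, so on Java 11 or later a JAXB dependency has to be on the classpath, and newer stacks use `jakarta.xml.bind` instead.

```java
import javax.xml.bind.annotation.XmlAccessType;
import javax.xml.bind.annotation.XmlAccessorType;
import javax.xml.bind.annotation.XmlElement;
import javax.xml.bind.annotation.XmlRootElement;

@XmlRootElement(name = "Movie")       // name of the node for a single record
@XmlAccessorType(XmlAccessType.FIELD) // bind the annotated fields, not the getters
public class XmlMovieDTO {

    @XmlElement(name = "Title")
    private String title;

    @XmlElement(name = "Director")
    private String director;

    @XmlElement(name = "Genre")
    private String genre;

    @XmlElement(name = "Remark")
    private String remark;

    public String getTitle() { return title; }
    public void setTitle(String title) { this.title = title; }

    public String getDirector() { return director; }
    public void setDirector(String director) { this.director = director; }

    public String getGenre() { return genre; }
    public void setGenre(String genre) { this.genre = genre; }

    public String getRemark() { return remark; }
    public void setRemark(String remark) { this.remark = remark; }
}
```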

And that’s it for the processor. Movie in… XmlMovie out.

Item writer

Almost there. We just need to implement the writer and do some configuration and we are done. But first things first. Let’s implement the writer. The writer is also implemented as a bean in the SpringBootBatchConfig class.

Here we have to create a StaxEventItemWriter, which is Spring Batch’s XML writer. It needs a marshaller for converting the XmlMovieDTO object into actual XML, a root tag name for the root element/node of the XML and the location (Resource) where the XML output file is going to be placed.
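Put into code, those three ingredients might be wired up as in the excerpt below. Treat it as a sketch under a few assumptions: Spring Batch 4.x, spring-oxm on the classpath (that is where `Jaxb2Marshaller` lives), and a hypothetical `output.file` property for the target location. The other beans of the configuration class are left out.

```java
import org.springframework.batch.item.xml.StaxEventItemWriter;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.io.Resource;
import org.springframework.oxm.jaxb.Jaxb2Marshaller;

@Configuration
public class SpringBootBatchConfig {

    // Hypothetical property pointing at the XML file to create
    @Value("${output.file}")
    private Resource outputFile;

    @Bean
    public StaxEventItemWriter<XmlMovieDTO> writer() {
        // Marshaller that turns an XmlMovieDTO into a <Movie> element
        Jaxb2Marshaller marshaller = new Jaxb2Marshaller();
        marshaller.setClassesToBeBound(XmlMovieDTO.class);

        StaxEventItemWriter<XmlMovieDTO> writer = new StaxEventItemWriter<>();
        writer.setResource(outputFile);   // where the XML ends up
        writer.setRootTagName("Movies");  // root element wrapping all records
        writer.setMarshaller(marshaller);
        return writer;
    }
}
```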

Let's glue it all together

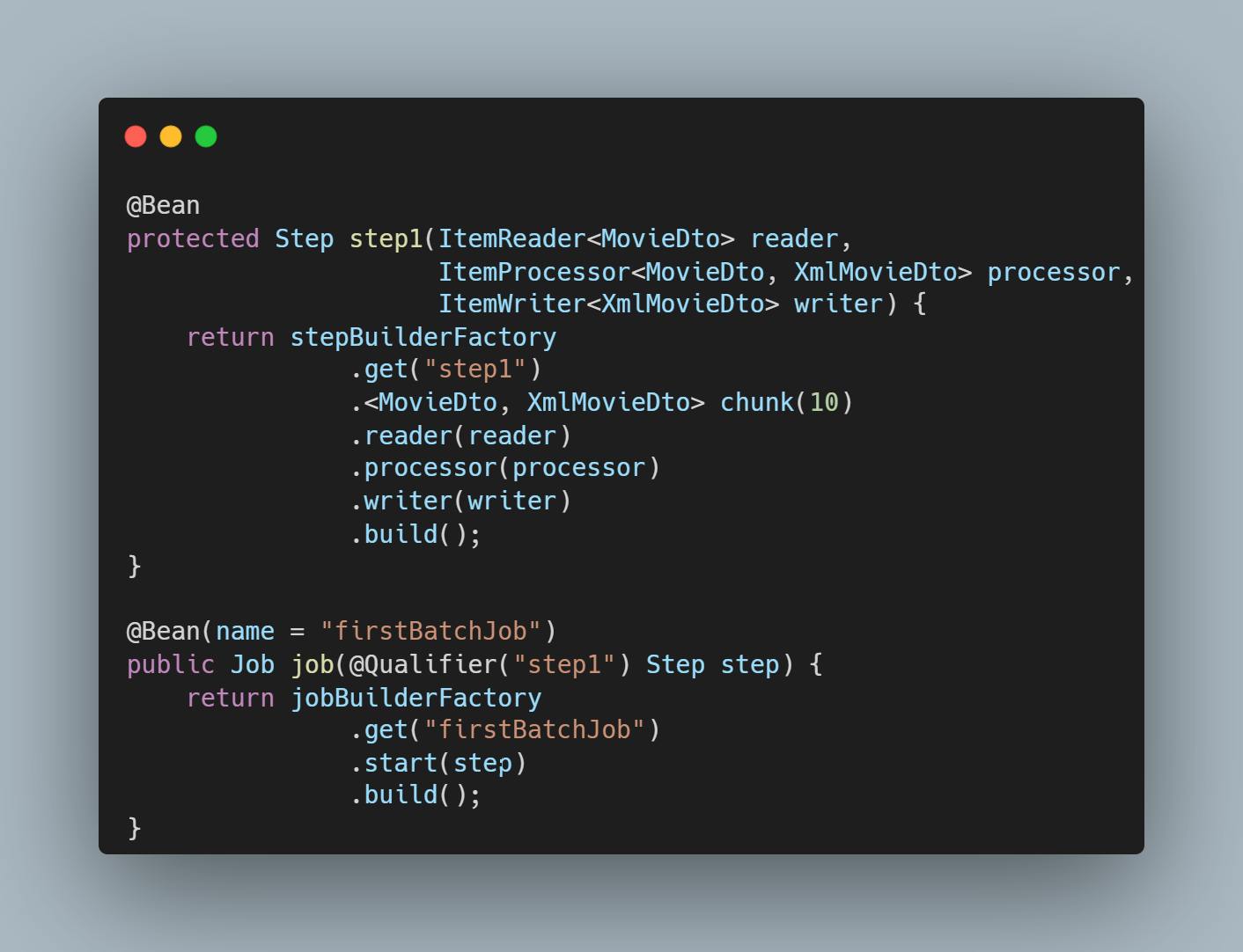

To tie all of the components together and make it work we have to configure a job. A job can have several steps. In our case we just need one step in the job.

When you look at the step method you see that this is the place where it all comes together. The reader, the processor and the writer. And that is all! Just run the application and your csv file will be turned into an XML file in no time.
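For reference, a job with a single chunk-oriented step could be configured roughly like this. It is a sketch assuming Spring Batch 4.x and assuming the reader, processor and writer are all available as Spring beans; the job name, the step name and the chunk size of 100 are arbitrary choices for this example.

```java
import org.springframework.batch.core.Job;
import org.springframework.batch.core.Step;
import org.springframework.batch.core.configuration.annotation.EnableBatchProcessing;
import org.springframework.batch.core.configuration.annotation.JobBuilderFactory;
import org.springframework.batch.core.configuration.annotation.StepBuilderFactory;
import org.springframework.batch.item.ItemProcessor;
import org.springframework.batch.item.ItemReader;
import org.springframework.batch.item.ItemWriter;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;

@Configuration
@EnableBatchProcessing
public class SpringBootBatchConfig {

    @Autowired
    private JobBuilderFactory jobBuilderFactory;

    @Autowired
    private StepBuilderFactory stepBuilderFactory;

    @Bean
    public Step step(ItemReader<Movie> reader,
                     ItemProcessor<Movie, XmlMovieDTO> processor,
                     ItemWriter<XmlMovieDTO> writer) {
        return stepBuilderFactory.get("csvToXmlStep") // arbitrary step name
                .<Movie, XmlMovieDTO>chunk(100)       // items per write/commit, arbitrary size
                .reader(reader)
                .processor(processor)
                .writer(writer)
                .build();
    }

    @Bean
    public Job job(Step step) {
        return jobBuilderFactory.get("csvToXmlJob")   // arbitrary job name
                .start(step)                          // our one and only step
                .build();
    }
}
```

The chunk size controls how many records are read and processed before they are handed to the writer in one go, so for a huge csv it is the main knob to play with.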

Did you notice that I didn’t even talk about the Job Repository and the Job Launcher that were shown in the overview picture? That’s because I don’t have to. Spring Batch takes care of all the low-level boring stuff and you can focus on the actual business logic.

The full working example can be found on my GitHub:

https://github.com/vlouisa/spring-batch-demo/tree/master

As a bonus I added a version of the application that can transform the same input file into files with a different output format (JSON & XML). Switching between formats is handled in the application.properties file. Just set the correct profile and there you go!

The multiple output format version can be found over here:

https://github.com/vlouisa/spring-batch-demo/tree/multiple-output-format